Context Engineering for AI Analysts

What if your AI Analyst understood your business as well as your best human analyst?

For decades, data teams have been the translators between business questions and data systems. Every insight began with a question such as “Why did revenue drop?” and required someone fluent in both business and SQL to find the answer. Then there were the tools, handoffs, dashboards, and meetings needed to answer the next question, “What should we do next?” It was time-consuming, subjective, and unreliable.

Today, a new teammate is emerging to close that gap and accelerate insights: the AI Analyst. It’s not a dashboard or a chatbot. It’s a conversational layer that allows you to talk to data, and find out what happened, why it happened, and what to do next. It’s the holy grail of analytics: the ability to talk to data and know how to act on it – no disjointed tools, static dashboards, or extra meetings.

Talking to an AI agent about your data promises to change the game for enterprise analytics, yet it’s not working. Research from MIT Sloan found that 95% of AI pilots fail in production, creating what I call the AI value chasm. So the question we started asking was, why?

To find out, we tapped into our most AI-forward customers, rolling up our sleeves and working alongside them to uncover the blockers to AI use cases. What we repeatedly saw in our workshops was that talking to data was one thing, but being understood was another. AI systems couldn’t fully understand or explain what happened, why it happened, and what to do next because they lacked the context needed to do so: the definitions, metrics, unwritten rules, and judgment calls that live in people’s heads.

The space between what AI Analysts know and what humans know, but may not have documented, is what we call the context gap. And it’s why, despite the hype, most AI Analysts are failing in production.

But we’ve also seen what happens when the context gap is closed. Working with customers like Workday, we’ve achieved a 5x increase in response accuracy by engineering context into AI systems. It’s proof that being understood by AI, not just talking to it, is the key to tapping into the potential of enterprise-scale AI analysts. The question, and perhaps the future, for data teams is:

How to engineer context for AI Analysts.

From What Happened to What Next

AI Analysts aren’t replacing human analysts; they’re accelerating how humans reason with data, so they can make smarter decisions with it. They’re not just understanding what happened, and explaining why it happened, but actually suggesting what to do about it.

That final step relies on deep context – not just about data, but about goals, history, and patterns of decision-making specific to your organization. It’s the most complex element, and the most human — where AI and human analysts work together to turn shared meaning into action.

Moving from what to why to what next is not as simple as flipping a switch; it requires building a system.

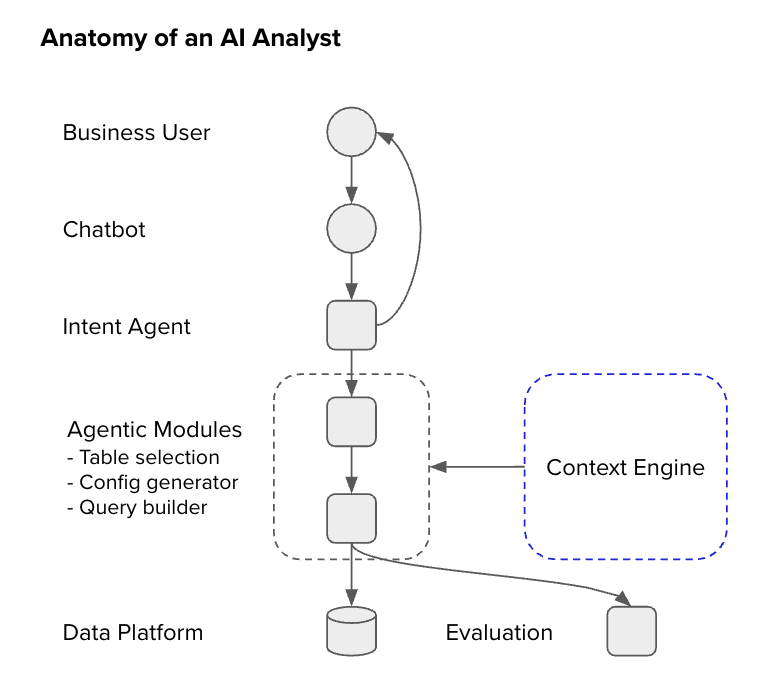

The Anatomy of an AI Analyst

Accuracy is the first, and arguably the most important, factor in deploying AI Analysts. It requires looking at what happens inside the system when the AI Analyst receives a question like, “What was our sales performance last quarter?”

A human analyst wouldn’t just pull numbers; they’d clarify what “performance” means, recall the right metric, find where it’s stored, and compute the result. The AI analyst mirrors that reasoning pattern through a series of steps:

1. Intent: Understanding what the user is asking and what they’re trying to find out.

2. Business Definition: Connecting intent to business meaning.

3. Data Definition: Mapping business meaning to actual data.

4. Response: Generating and validating the answer – not just responding, but actually explaining.
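The four steps above can be sketched as a small pipeline. This is a minimal illustration, not a real implementation: the `GLOSSARY` and `CATALOG` contents, the table name `finance.orders`, and the `answer` function are all hypothetical, and intent detection is reduced to a substring match where a real system would rely on a language model.

```python
from dataclasses import dataclass

# Hypothetical context: business terms -> metric names -> physical data.
GLOSSARY = {"sales performance": "net_revenue"}
CATALOG = {"net_revenue": ("finance.orders", "SUM(amount)")}


@dataclass
class Answer:
    metric: str
    sql: str
    explanation: str


def answer(question: str) -> Answer:
    # 1. Intent: find the business term the question is about.
    text = question.lower()
    term = next((t for t in GLOSSARY if t in text), None)
    if term is None:
        raise LookupError(f"No known business term in: {question!r}")

    # 2. Business definition: map the term to an agreed metric.
    metric = GLOSSARY[term]

    # 3. Data definition: map the metric to a table and an expression.
    table, expression = CATALOG[metric]

    # 4. Response: produce the query and explain how it was derived.
    # (Time filters such as "last quarter" are left out of this sketch.)
    sql = f"SELECT {expression} AS {metric} FROM {table}"
    explanation = f"'{term}' is defined as {metric}, computed as {expression} on {table}."
    return Answer(metric, sql, explanation)


result = answer("What was our sales performance last quarter?")
print(result.sql)
```

Returning the explanation alongside the query reflects the point of step 4: the response should show how the answer was derived, not only what it is.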

Context Engineering for AI Analysts

When an AI Analyst translates a natural-language question into an insight, its accuracy doesn’t depend on the size of the model, but on how well it understands the meaning behind your data. Context provides that meaning; it’s how an AI system knows that “performance” means revenue growth, that “churn” means inactive customers, and that these definitions may differ between Finance and Marketing. Without it, even the smartest model is bound to make mistakes.

The mandate for data teams, then, is to engineer context into models. Here’s how:

1. Identify Sources of Context

Every AI Analyst operates with two worlds of knowledge: the one it already knows, and the one it learns from you. The former is known as model context, and it’s the common language across industries that allows AI to reason logically. The latter is specialized organizational context, which translates your organization’s data definitions, goals, and semantics into machine understanding.

TL;DR: Model Context × Organizational Context = Real Understanding

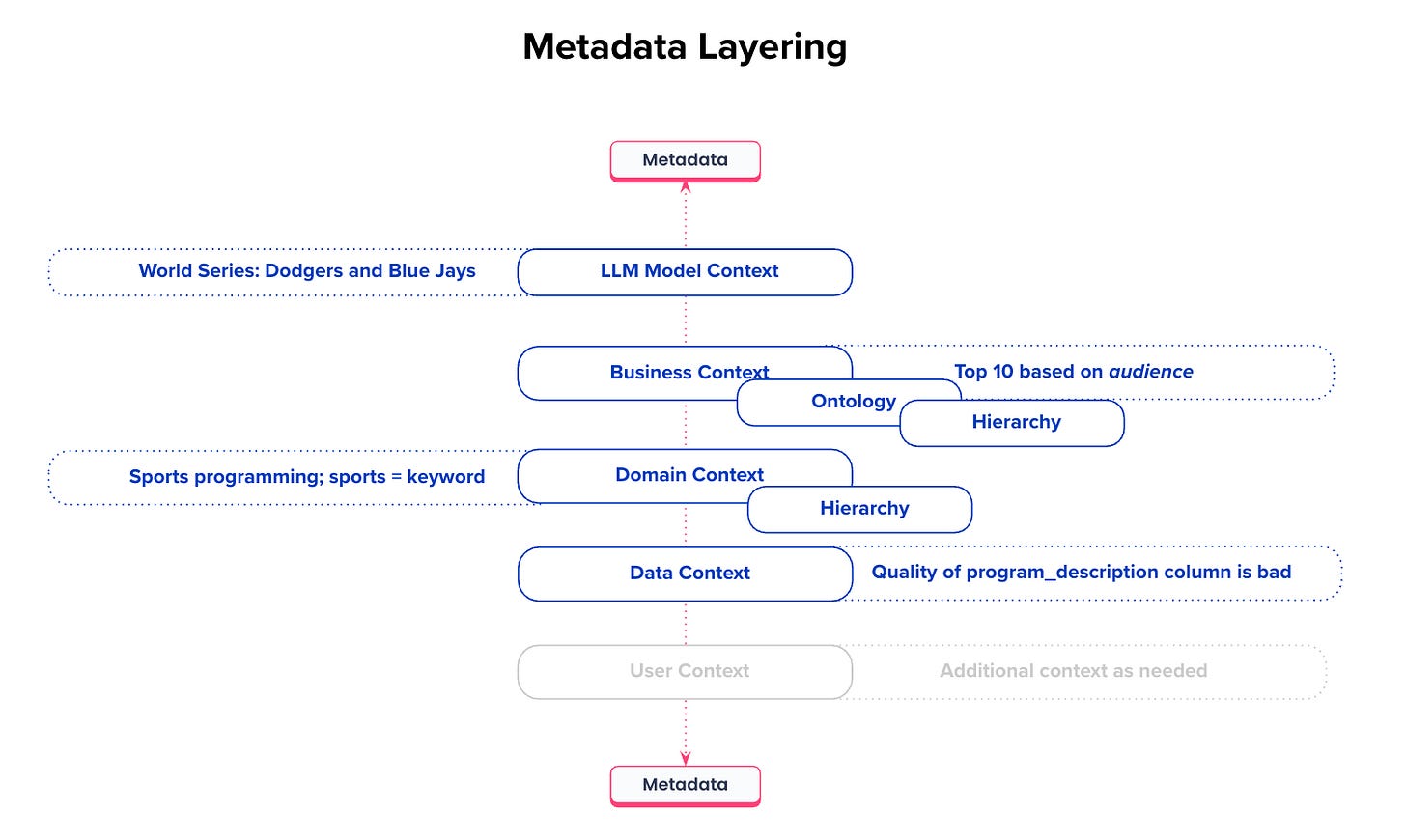

2. Layer Context to Create a Semantic Scaffolding

Every organization has layers of context that mirror how humans think about data. They create the semantic scaffold that enables the AI Analyst to reason accurately, grounded in organizational meaning.

The model context gives the system language and logic. But context about users, the business, domains, and the data itself localizes that knowledge to your business.
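One way to picture layering is as a lookup chain in which a more specific layer overrides a more general one. The sketch below uses Python's `collections.ChainMap` for that. The layer names, the definitions inside them, and the precedence order (domain before business-wide) are assumptions made for illustration.

```python
from collections import ChainMap

# Hypothetical layers. The business-wide layer holds shared definitions;
# the domain layers hold made-up overrides for Finance and Marketing.
business = {"churn": "inactive customers", "performance": "revenue growth"}
finance = {"churn": "customers with no paid invoice in the last quarter"}
marketing = {"performance": "campaign conversion rate"}


def context_for(domain_layer: dict) -> ChainMap:
    # ChainMap searches its maps left to right, so the domain layer
    # overrides the business-wide layer where both define a term.
    return ChainMap(domain_layer, business)


finance_ctx = context_for(finance)
marketing_ctx = context_for(marketing)

print(finance_ctx["churn"])    # Finance-specific definition
print(marketing_ctx["churn"])  # falls back to the business-wide definition
```

The same term can therefore resolve differently depending on who is asking, while terms without a domain override fall back to the shared definition.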

3. Establish the Context Supply Chain

Just as data pipelines ensure data quality, a context supply chain ensures semantic quality: consistency and correctness of meaning across systems. The context supply chain process unfolds as a living loop, not a one-time setup:

Bootstrap: Connect your existing metadata to form a base layer for organizational understanding.

Test for Readiness: Evaluate whether the AI Analyst can accurately answer key business questions.

Continuously Deploy and Observe: Monitor how the AI Analyst applies context — where it performs well, where it misinterprets intent, or where definitions are missing.

Enrich: Use insights from observation to refine definitions, relationships, and metric logic.

Validate and Scale: Re-test after enrichment, confirm improvements, and propagate verified context across domains and teams.
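The "Test for Readiness" and "Validate and Scale" stages can be sketched as a small regression suite that is re-run after every enrichment. Everything here is hypothetical: the golden questions, the expected metric names, the 0.9 threshold, and `stub_analyst`, which stands in for whatever AI Analyst is being evaluated.

```python
from typing import Callable

# Hypothetical golden set: key business questions and the metric each
# one should resolve to.
GOLDEN_CASES = [
    ("What was our sales performance last quarter?", "net_revenue"),
    ("How has customer satisfaction trended in Europe?", "csat_score"),
]


def readiness_report(analyst: Callable[[str], str], cases, threshold: float = 0.9) -> dict:
    # Ask the analyst every golden question and record the mismatches.
    failures = []
    for question, expected in cases:
        actual = analyst(question)
        if actual != expected:
            failures.append({"question": question, "expected": expected, "actual": actual})
    accuracy = 1 - len(failures) / len(cases)
    return {"accuracy": accuracy, "ready": accuracy >= threshold, "failures": failures}


def stub_analyst(question: str) -> str:
    # Stand-in for the real AI Analyst; it only knows one definition.
    return "net_revenue" if "sales" in question.lower() else "unknown"


report = readiness_report(stub_analyst, GOLDEN_CASES)
print(report["accuracy"], report["ready"])
```

The `failures` list is the input to the Enrich stage: each entry points at a definition that is missing or wrong.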

4. Continuously Refine Context

Like data, context decays over time: definitions evolve, new metrics emerge, and teams reinterpret goals. That’s why context engineering must be continuous. It thrives on a human–AI feedback loop that improves retrieval and accuracy over time. This system of continuous context enrichment makes organizational knowledge a living, evolving system that keeps up with the business.

5. Orient Data Teams Around Engineering Context

Context engineering introduces a new way of working. Instead of focusing solely on producing data assets, data teams begin to engineer shared understanding. This means:

Context is co-created by humans and AI.

It is tested and validated before being trusted.

It is monitored and refined through continuous observation.

It is scaled via metadata automation and governance.

Context is the intelligence layer that makes AI Analysts accurate and trustworthy. Engineering it is how you ensure that AI systems don’t just answer questions but understand them.

Context Engineering in Practice

To understand how context engineering works in the real world, imagine an executive asks: “How has customer satisfaction trended in Europe for our enterprise customers this year?”

It may seem like a simple question, something any data analyst could handle. But for an AI Analyst, it’s a multi-layered reasoning problem. It needs to translate natural language into precise data logic, align vague business terms with metrics, and ensure it’s using the right dimensions, filters, and relationships. This is done in a five-step process:

1. Dissecting the Question

Every natural-language query can be broken down into its core analytical components:

Measures are business concepts that must be translated to a column or calculation.

Dimensions are the entities used for grouping and filtering results.

Filters are constraints applied to the corresponding dimensions.

This breakdown is the first step in translating human language into structured reasoning.
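As a sketch, the executive's question from above could be decomposed into a structure like the following. The decomposition is written by hand to show the target shape. The `ParsedQuestion` class and the dimension names are hypothetical, and a real system would produce this structure with a language model.

```python
from dataclasses import dataclass, field


@dataclass
class ParsedQuestion:
    measures: list[str]                 # business concepts to compute
    dimensions: list[str]               # entities for grouping and filtering
    filters: dict[str, str] = field(default_factory=dict)  # dimension -> constraint

    def unanchored_filters(self) -> list[str]:
        # Every filter should constrain a declared dimension.
        return [d for d in self.filters if d not in self.dimensions]


# "How has customer satisfaction trended in Europe for our enterprise
# customers this year?"
parsed = ParsedQuestion(
    measures=["customer satisfaction"],
    dimensions=["region", "customer segment", "time"],
    filters={
        "region": "Europe",
        "customer segment": "enterprise",
        "time": "this year",
    },
)

print(parsed.unanchored_filters())
```

An empty result from `unanchored_filters()` means every filter is tied to a dimension, which is the precondition for the mapping steps that follow.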

2. Translating Business Terms into Data Reality

A term like “Customer Satisfaction” isn’t a column name – and depending on the organization, it could mean different things:

Average NPS score (nps_score)

Percentage of satisfied customers (satisfaction_rate)

CSAT score from survey data (csat_score)

For the AI Analyst to select the right one, context must do the heavy lifting, pulling from business glossaries, column descriptions, and metadata relationships. Here, metadata richness is the difference between success and failure. Without a description or glossary mapping, “customer satisfaction” could easily point to the wrong column.
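A minimal sketch of that lookup, assuming a glossary that maps business terms to columns: the three column names come from the list above, while the choice of `csat_score` as this organization's definition and the `resolve_measure` function are hypothetical. Note that the resolver refuses to guess when no mapping exists.

```python
# Candidate columns and their descriptions, taken from the list above.
COLUMN_DESCRIPTIONS = {
    "nps_score": "Average NPS score",
    "satisfaction_rate": "Percentage of satisfied customers",
    "csat_score": "CSAT score from survey data",
}

# Assumption: this organization has agreed that "customer satisfaction"
# means the CSAT score.
GLOSSARY = {"customer satisfaction": "csat_score"}


def resolve_measure(term: str, glossary: dict, columns: dict) -> str:
    key = term.strip().lower()
    column = glossary.get(key)
    if column is None:
        # No mapping: surface the ambiguity instead of guessing.
        raise LookupError(
            f"No glossary mapping for {term!r}; candidates: {sorted(columns)}"
        )
    if column not in columns:
        raise LookupError(f"Glossary points to unknown column {column!r}")
    return column


print(resolve_measure("Customer Satisfaction", GLOSSARY, COLUMN_DESCRIPTIONS))
```

Raising an error on a missing mapping turns a silent wrong answer into a visible gap that someone can fill in the glossary.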

3. Mapping Semantic Reasoning

Context engineering ensures that user intent connects to the correct data through multi-layered reasoning:

Semantic Similarity – Matches user terms against column names, glossary synonyms, and descriptions.

Data Matching – Uses sample values to resolve filters and ensure validity.

Relationship Validation – Ensures measures and filters originate from connected tables.

Aggregation & Temporal Logic – Applies correct calculation rules and time boundaries.

The final output goes beyond just a query; it’s a validated reasoning path from language to logic.
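The first three checks can be sketched as follows. All table names, column names, synonyms, and sample values are hypothetical, and `difflib.SequenceMatcher` from the standard library is used as a simple string-similarity stand-in where a production system would more likely use embeddings. Aggregation and temporal logic are left out of this sketch.

```python
from difflib import SequenceMatcher

# Hypothetical metadata: synonyms and sample values per column.
COLUMNS = {
    "surveys.csat_score": {"synonyms": ["customer satisfaction", "csat"], "samples": []},
    "accounts.region": {"synonyms": ["region", "geography"], "samples": ["Europe", "North America"]},
    "accounts.segment": {"synonyms": ["segment", "customer type"], "samples": ["enterprise", "smb"]},
}
# Hypothetical join graph: pairs of tables that can be joined.
JOINS = {("surveys", "accounts")}


def similarity(a: str, b: str) -> float:
    return SequenceMatcher(None, a.lower(), b.lower()).ratio()


def best_column(term: str) -> str:
    # Semantic similarity: score each column by its closest synonym.
    scored = [
        (max(similarity(term, s) for s in meta["synonyms"]), name)
        for name, meta in COLUMNS.items()
    ]
    return max(scored)[1]


def column_for_value(value: str):
    # Data matching: find the column whose sample values contain the filter value.
    for name, meta in COLUMNS.items():
        if value.lower() in (s.lower() for s in meta["samples"]):
            return name
    return None


def connected(col_a: str, col_b: str) -> bool:
    # Relationship validation: both columns must sit in the same or joinable tables.
    table_a, table_b = col_a.split(".")[0], col_b.split(".")[0]
    return table_a == table_b or (table_a, table_b) in JOINS or (table_b, table_a) in JOINS


measure = best_column("customer satisfaction")
region = column_for_value("Europe")
print(measure, region, connected(measure, region))
```

Keeping the three checks as separate functions makes each step of the reasoning path inspectable when a query goes wrong.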

4. Incorporating Metadata

Metadata, especially column descriptions, is the connective tissue of context engineering. It provides the semantic anchor that lets the AI Analyst interpret terms correctly. Without it, the AI has to guess, and guessing in data is just another form of inaccuracy.

5. Avoiding Mistakes

There are two key things not to do when implementing context engineering:

Don’t Dump Context into Prompts

A common anti-pattern is to embed all metadata, such as glossaries, SQL definitions, and lineage, directly into LLM instructions. This feels easy at first, but it breaks down fast as complexity grows, causing context rot, ambiguity collisions, and query failures that are a black box to debug.

Instead, context must live as structured, queryable metadata, not static prompt text. The AI analyst should retrieve the right context dynamically, pulling relevant definitions for each user query instead of memorizing everything.
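A minimal sketch of per-query retrieval, assuming the context lives in a store of structured entries: the store contents and definitions are made up, and simple word overlap stands in for whatever retrieval method (keyword search, embeddings, a metadata API) a real system would use.

```python
import re

# Hypothetical context store; in practice this would be a metadata catalog.
CONTEXT_STORE = [
    {"term": "customer satisfaction", "definition": "CSAT score from survey data"},
    {"term": "churn", "definition": "inactive customers"},
    {"term": "enterprise customers", "definition": "accounts in the enterprise segment"},
    {"term": "gross margin", "definition": "revenue minus cost of goods sold, over revenue"},
]


def tokens(text: str) -> set:
    return set(re.findall(r"[a-z]+", text.lower()))


def retrieve(question: str, store: list, k: int = 2) -> list:
    # Score each entry by how many of its term's words appear in the question.
    question_words = tokens(question)
    scored = [(len(question_words & tokens(entry["term"])), entry) for entry in store]
    relevant = [pair for pair in scored if pair[0] > 0]
    relevant.sort(key=lambda pair: pair[0], reverse=True)  # stable sort keeps store order on ties
    return [entry for _, entry in relevant[:k]]


question = "How has customer satisfaction trended in Europe for our enterprise customers this year?"
selected = retrieve(question, CONTEXT_STORE)

# Only the retrieved definitions go into the prompt, not the whole store.
prompt_context = "\n".join(f"{e['term']}: {e['definition']}" for e in selected)
print(prompt_context)
```

Because the store is data rather than prompt text, a definition can be corrected or versioned in one place and every later query picks up the change.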

Don’t Overfit to Vocabulary

It’s tempting to make the AI Analyst rely on exact name matches. But real data is messy. That’s why semantic similarity and data validation must coexist: language understanding for breadth, and data verification for depth.

Ultimately, the goal of context engineering is to ensure that the right column in the right table, with the right relationship, answers the right question. That means building rich semantic metadata, continuously testing how well the system maps intent to structure, and treating every query not as an answer, but as a context test case that strengthens the next one.

This is what context engineering looks like in practice:

Dissect the question into its measures, dimensions, and filters.

Use metadata and semantic matching to translate business language into data logic.

Validate relationships and aggregations through structured lineage.

Continuously enrich and version the context layer, not the prompt.

In the end, an AI Analyst is only as intelligent as the context that surrounds it. And that context isn’t written in prompts; it’s engineered.

Taking AI Analysts From Vision to Reality

If most AI Analysts are failing in production, we need to take another look at what organizational context we’ve given them, and acknowledge whether it’s enough. Because if AI Analysts are really going to become valuable, functional parts of the enterprise, getting context right is non-negotiable.

In this new paradigm, the most valuable skill isn’t writing SQL or tuning models; it’s engineering context. And that requires a human touch. The AI Analyst can reason, but only within the boundaries of the context it’s given. Humans, acting as context engineers, define those boundaries and improve them over time.

Together, they form a continuous feedback loop of understanding: AI accelerates reasoning; humans refine meaning. And with that, the promise of AI can finally become reality.

The Insight Index: Your Weekly Data & AI Digest

Top resources and recommended reads, carefully curated for you.

Semantics in Data Modeling — Joe Reis

The Most Expensive Mistake in Data Architecture? Confusing Run Cost with Total Cost of Ownership(TCO) — Himanshu Gaurav

Context Engineering as a Discipline: Building Governed AI Analytics — Tobias Macey & Nick Schrock

Grounding LLMs: The Knowledge Graph foundation every AI project needs — Alessandro Negro

Knowledge Graphs and Ontologies: Beyond the Dictionary Fallacy — Nicolas Figay

A Decade of AI Platform at Pinterest — David Liu

Is Context Aggregation The Real AI Battleground? — Dave Rothschild

The Missing Line Item in Your 2026 AI Budget: Context Infrastructure — Prukalpa Sankar

That’s all for this edition. Stay curious, stay contextual. See you all soon in the next one!

Metadata Weekly isn’t just a newsletter. It’s a shared community space where practitioners, builders, and thinkers come together to share stories, lessons, and ideas about what truly matters in the world of data and AI: trust, governance, context, discovery, and the human side of doing meaningful work.

Our goal is simple: to create a space that cuts through the noise and celebrates the people behind the amazing things that are happening in the data & AI domain.

Whether you’re solving messy problems, experimenting with AI, or figuring out how to make data more human, Metadata Weekly is your place to learn, reflect, and connect.

Got something on your mind? We’d love to hear from you. Hit Reply!

I thought this was a super interesting/relevant read - thank you! Out of curiosity, how do you (and other readers) think about the idea that "context must live as structured, queryable metadata?" This seems like the key point to me, but training the AI analyst to be able to access this info dynamically feels like a non-trivial task to me.